We are standing at the edge of a new collaboration era.

Not the era where machines replace people.

Not the era where every person sits alone, prompting a private assistant in a private tab, creating private acceleration that nobody else can see, reuse, challenge, or trust.

The real opportunity is bigger and more human than that.

It is the moment when human intelligence and artificial intelligence begin to work together inside the same shared context.

A founder, a teammate, a customer, a designer, an engineer, an advisor, and an AI agent should not each hold a different fragment of the truth. The work should have a place where context lives, where decisions are visible, where artifacts can be shaped, where boundaries are respected, and where human judgment remains in authority.

That is Shared Intelligence.

Shared Intelligence is not a slogan for group chat with AI sprinkled on top.

It is a new operating layer for human work.

It is the shift from isolated intelligence to connected intelligence. From disposable prompts to durable context. From private acceleration to shared understanding. From AI as a tool outside the room to AI as a bounded participant inside the work.

If we design it well, this can become a golden age of human-AI collaboration.

But only if we are honest about what must be built.

The first AI wave made individuals faster

The first mass-market wave of generative AI was personal.

One person could draft faster, code faster, summarize faster, research faster, brainstorm faster, and prepare faster. That mattered. It still matters. Individual leverage is real.

But individual leverage is not the same as organizational intelligence.

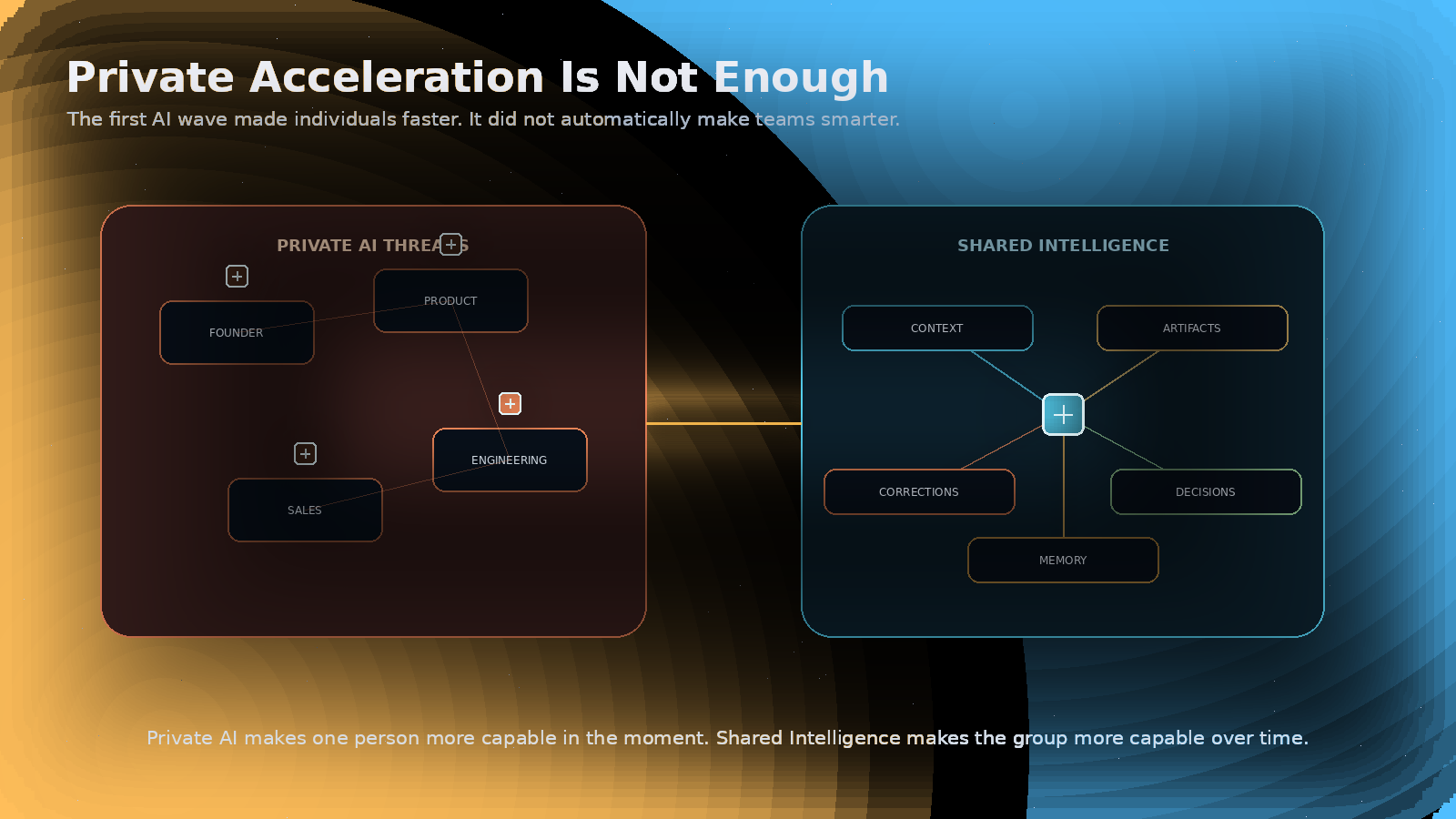

A team can have five people using AI every day and still be confused. The founder has one AI thread. Product has another. Engineering has another. Sales has another. Customer feedback sits in a CRM. The real decision happened in a meeting. The rationale is buried in Slack. The AI that produced the useful draft cannot see the correction that happened later.

Everyone moves faster.

The work still fragments.

This is the central failure of the first AI wave: it increases output before it fixes shared context.

Private AI makes one person more capable in the moment. Shared Intelligence makes the group more capable over time.

That difference is everything.

Intelligence is not only inside the skull

Human intelligence has never been purely individual.

Language is shared intelligence. Writing is shared intelligence. Maps, calendars, contracts, schools, libraries, scientific journals, markets, operating procedures, and companies are all ways of extending human thought beyond one person.

For thousands of years, humanity has been getting thought out of the skull and into shared systems.

Writing let memory survive the body. Printing let ideas scale. Computers let symbols become interactive. Networks let information move instantly. AI now does something stranger: it brings synthesis, planning, language, simulation, and structured comparison into the workflow itself.

That does not make AI identical to human intelligence.

It does not give AI moral responsibility, lived experience, embodied wisdom, or human stakes.

But it does mean the boundary of practical intelligence is moving.

The important question is no longer only:

Can a machine produce an intelligent answer?

The better question is:

Can humans and AI become a more intelligent collaboration system together?

That is the question Shared Intelligence is trying to answer.

The missing layer is not the model. It is the collaboration layer.

Most AI debates still orbit the model.

Which model is smarter? Which one is cheaper? Which one has a larger context window? Which one writes better code? Which one passes more benchmarks?

Those questions matter, but they are not enough.

When AI participates in real work, the collaboration layer becomes decisive.

The collaboration layer answers the questions models do not answer by themselves:

- Who is involved?

- What is the shared goal?

- What context is allowed?

- What has already been decided?

- What is still uncertain?

- What can the AI do?

- What requires human approval?

- What should become an artifact?

- What should be remembered?

- What should be forgotten?

- What evidence remains behind?

Without that layer, AI creates impressive fragments.

With that layer, AI can help humans build continuity.

That is why Shared Intelligence is not just “better AI.” It is better collaboration architecture around AI.

The product is not the answer. The product is the loop.

In the old knowledge-work model, the unit of work was often the task.

Write the brief. Send the email. Create the ticket. Summarize the meeting. Draft the plan. Build the feature.

AI changes the shape of this.

The new unit of work is the loop.

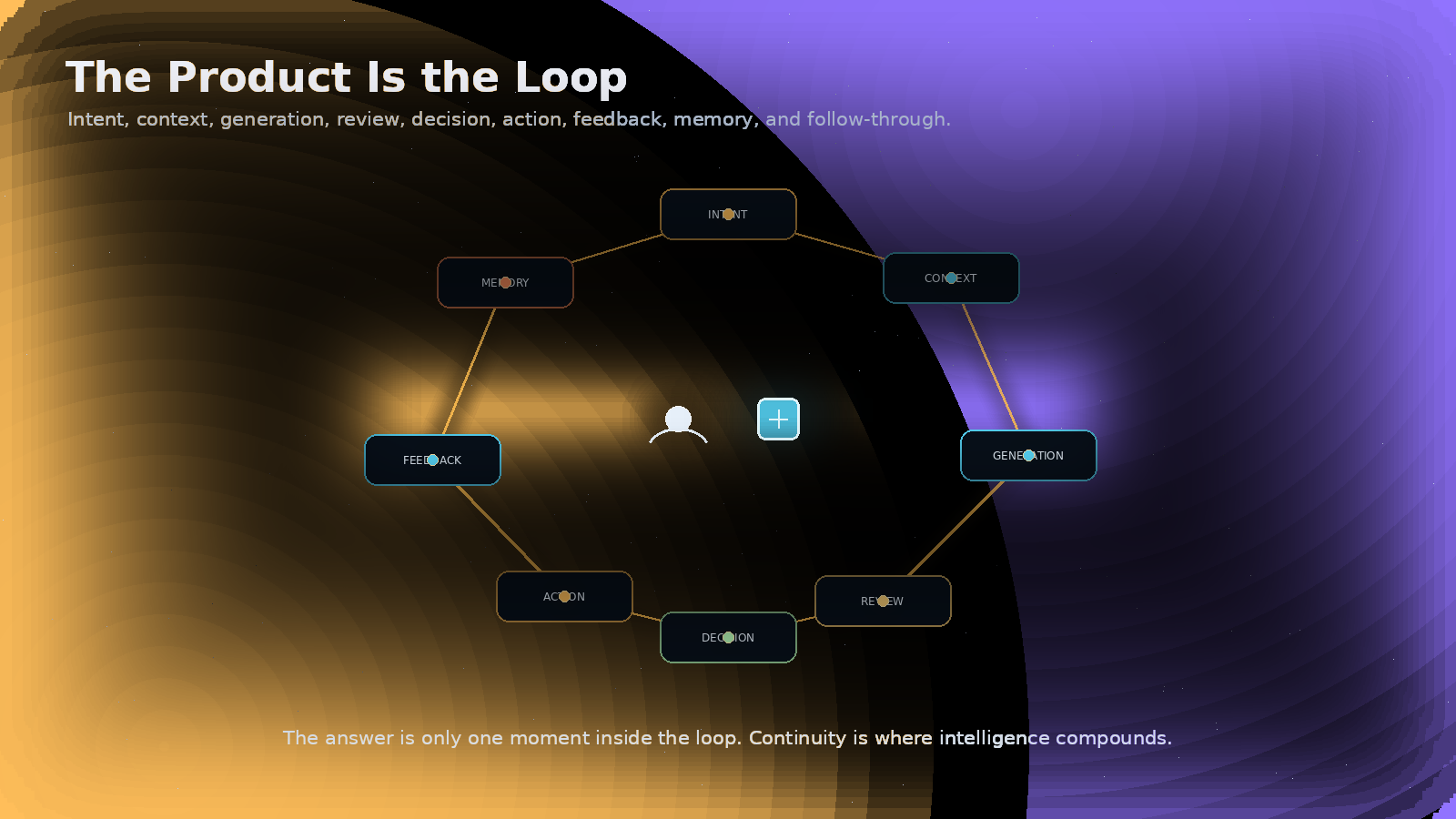

A loop includes intent, context, generation, review, decision, action, feedback, memory, and follow-through.

The answer is only one moment inside the loop.

A good AI answer that does not enter the right context is fragile. A good summary that no one trusts is noise. A great idea that cannot be connected to ownership, approval, evidence, and next steps is not yet work. A useful artifact that dies after being created is not intelligence compounding. It is output.

Shared Intelligence means designing the loop so humans and AI can keep improving the work together.

The value is not just faster drafts.

The value is better continuity.

The answer is only one moment inside the loop. Continuity is where intelligence compounds.

Conversation is where work begins

Most important work starts messy.

It starts as a conversation in a car, a late-night founder note, a customer call, a disagreement between product and engineering, a whiteboard session, a half-formed concern, or a moment of honesty that finally names what everyone has been avoiding.

Traditional software treats conversation as temporary.

Maybe it becomes a transcript. Maybe it becomes a summary. Maybe someone copies a few bullets into a document. Usually, most of the intelligence disappears.

That is backwards.

Conversation is not the prelude to the work.

Conversation is the work beginning to take shape.

The best ideas are often born before they are clean. The best decisions often emerge through tension. The most important context is often carried in tone, sequence, objection, hesitation, and correction.

A Shared Intelligence system should help people turn chosen parts of conversation into living threads, durable artifacts, and responsible follow-through without pretending that every private moment should be captured.

Not surveillance.

Not endless recording.

Permissioned continuity.

That is the breakthrough.

Memory must be chosen, not extracted

AI memory is powerful.

AI memory is also dangerous if handled lazily.

A system that forgets everything forces humans to rebuild context forever. A system that remembers everything becomes creepy, noisy, and untrustworthy. The right answer is not total amnesia or total capture.

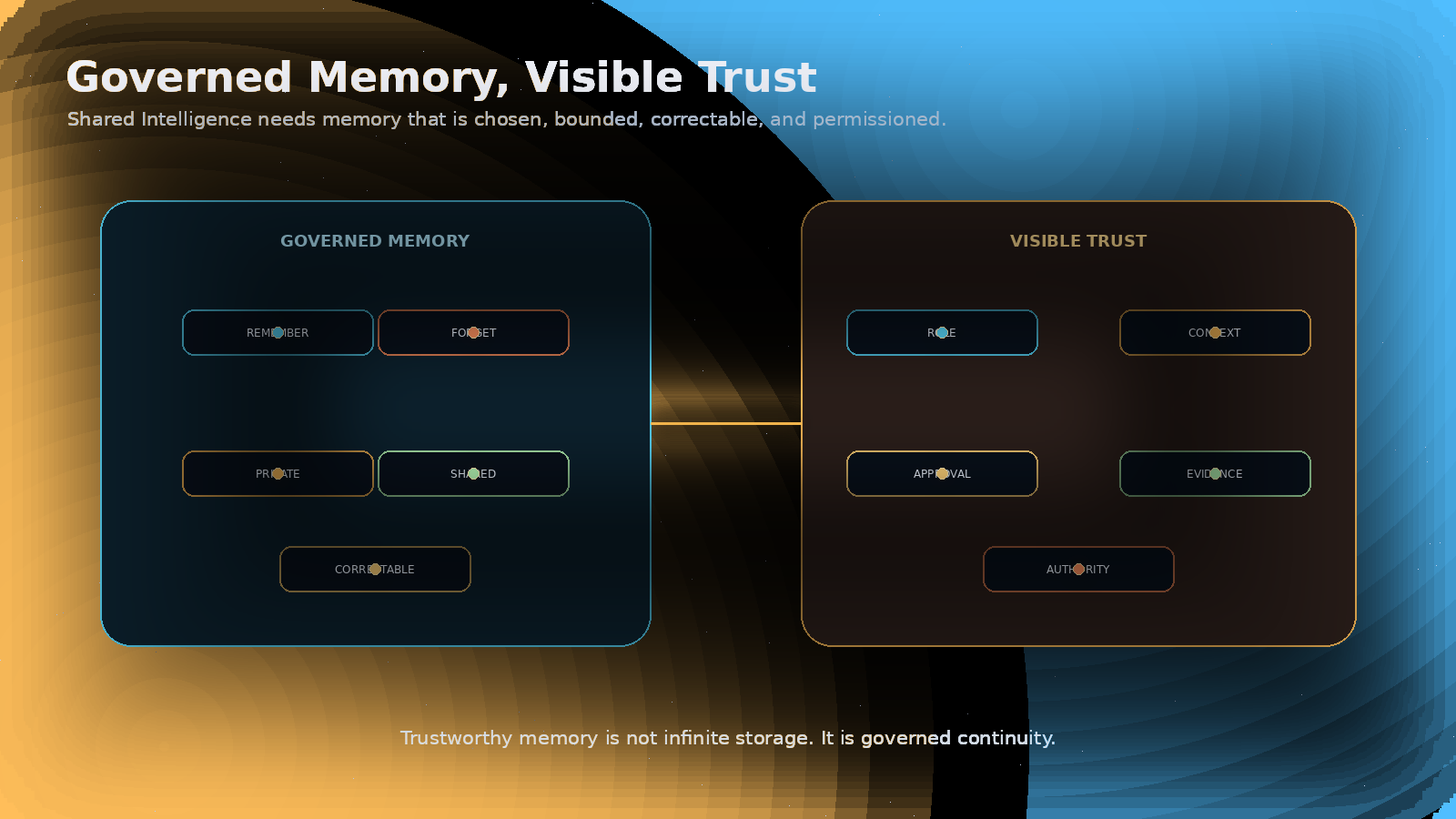

The right answer is governed memory.

Humans need practical controls:

- remember this

- forget this

- keep this private

- share this with the room

- turn this into an artifact

- turn this into a decision

- correct this going forward

- do not use this outside this context
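To make the controls above concrete, here is a minimal sketch of governed memory as a data structure. Everything in it is hypothetical. `Scope`, `MemoryItem`, `GovernedMemory` and their methods are illustrative names, not a Sociail API. The point it shows is narrow: each memory item carries an explicit scope, a room, and a history of who changed it. That lets "keep this private", "forget this", and "do not use this outside this context" be enforced whenever memory is recalled, instead of being left to convention.

```python
from dataclasses import dataclass, field
from enum import Enum


class Scope(Enum):
    PRIVATE = "private"  # visible only to the owner ("keep this private")
    ROOM = "room"        # visible to everyone in the same room


@dataclass
class MemoryItem:
    text: str
    owner: str
    room: str
    scope: Scope = Scope.PRIVATE
    kind: str = "note"  # "note", "artifact", or "decision"
    history: list[str] = field(default_factory=list)  # who changed what, in order


class GovernedMemory:
    """Hypothetical store: nothing is recalled unless its scope allows it."""

    def __init__(self) -> None:
        self._items: dict[int, MemoryItem] = {}
        self._next_id = 0

    def _visible(self, item_id: int, actor: str) -> MemoryItem:
        item = self._items[item_id]
        if item.scope is Scope.PRIVATE and item.owner != actor:
            raise PermissionError(f"{actor} cannot see item {item_id}")
        return item

    def remember(self, text: str, owner: str, room: str) -> int:
        """'Remember this': new items start private to their owner."""
        item_id = self._next_id
        self._next_id += 1
        item = MemoryItem(text=text, owner=owner, room=room)
        item.history.append(f"remembered by {owner}")
        self._items[item_id] = item
        return item_id

    def share_with_room(self, item_id: int, actor: str) -> None:
        """'Share this with the room': only the owner may widen scope."""
        item = self._items[item_id]
        if actor != item.owner:
            raise PermissionError("only the owner can share this")
        item.scope = Scope.ROOM
        item.history.append(f"shared with room by {actor}")

    def mark_as(self, item_id: int, kind: str, actor: str) -> None:
        """'Turn this into an artifact' or 'turn this into a decision'."""
        item = self._visible(item_id, actor)
        item.kind = kind
        item.history.append(f"marked as {kind} by {actor}")

    def correct(self, item_id: int, new_text: str, actor: str) -> None:
        """'Correct this going forward': the old text stays in the history."""
        item = self._visible(item_id, actor)
        item.history.append(f"corrected by {actor}: {item.text!r} -> {new_text!r}")
        item.text = new_text

    def forget(self, item_id: int, actor: str) -> None:
        """'Forget this': only the owner may delete, and nothing is kept."""
        if actor != self._items[item_id].owner:
            raise PermissionError("only the owner can forget this")
        del self._items[item_id]

    def recall(self, reader: str, room: str) -> list[str]:
        """Items never leave their room ('do not use this outside this context')."""
        return [
            item.text
            for item in self._items.values()
            if item.room == room
            and (item.scope is Scope.ROOM or item.owner == reader)
        ]


if __name__ == "__main__":
    mem = GovernedMemory()
    note = mem.remember("Launch slips to June", owner="ana", room="launch")
    print(mem.recall(reader="ben", room="launch"))   # [] (still private to ana)
    mem.share_with_room(note, actor="ana")
    print(mem.recall(reader="ben", room="launch"))   # ['Launch slips to June']
    print(mem.recall(reader="ben", room="pricing"))  # [] (never leaves its room)
```

In this sketch, `forget` removes an item outright, while `correct` keeps the earlier text in `history` so later readers can see what changed and who changed it. That asymmetry is a design choice of the example, not a claim about how any particular product must behave.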

Shared Intelligence should not mean accumulating data until the system becomes opaque.

It should mean accumulating understanding with boundaries.

The memory that matters is not just what was said. It is what was decided, why it was decided, who approved it, what changed later, and what should guide the next move.

That is how memory becomes trust.

Trustworthy memory is not infinite storage. It is governed continuity.

Agents need roles, not mystique

The word “agent” is already getting abused.

Some people use it to mean any AI that can call a tool. Some use it to mean autonomous software. Some use it to mean a persona. Some use it to mean a worker replacement. Some use it because it sounds futuristic.

In Shared Intelligence, an agent should be less mystical and more accountable.

An AI participant needs a role.

A summarizer is not an approver. A researcher is not a decision-maker. A drafting agent is not a legal authority. A code assistant is not a production release owner. A customer-support agent should not improvise commitments beyond policy. A planning agent should not silently turn speculation into execution.

Role clarity is not bureaucracy.

It is what makes AI participation useful enough to trust.

A shared workspace should make the agent’s role visible:

- what it is helping with

- what context it can use

- what it can suggest

- what it can change

- what requires approval

- what evidence it leaves behind

- how humans can correct it
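One way to make an agent's role visible is to declare it as data that the workspace checks before any action runs. The sketch below is hypothetical: `AgentRole`, `Workspace.authorize`, and action names such as `"publish-summary"` are illustrative and not an existing API. It mirrors the list above. Suggestions are allowed but not applied. Changes outside the role are refused. Changes that need approval are held until a named human signs off. Every outcome is appended to a visible evidence log.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class AgentRole:
    """Hypothetical role declaration that the workspace shows to every human."""
    name: str
    helps_with: str                  # what it is helping with
    context: frozenset[str]          # sources it may read
    can_suggest: frozenset[str]      # outputs it may propose but not apply
    can_change: frozenset[str]       # actions it may take directly
    needs_approval: frozenset[str]   # actions held until a human signs off

    def can_read(self, source: str) -> bool:
        return source in self.context


@dataclass
class Workspace:
    evidence: list[str] = field(default_factory=list)  # visible log of every decision

    def authorize(self, role: AgentRole, action: str,
                  approved_by: Optional[str] = None) -> bool:
        """Decide whether `role` may perform `action` now, and leave evidence."""
        if action in role.can_change:
            outcome = "allowed"
        elif action in role.can_suggest:
            outcome = "allowed as suggestion"
        elif action in role.needs_approval and approved_by:
            outcome = f"allowed, approved by {approved_by}"
        elif action in role.needs_approval:
            outcome = "held for human approval"
        else:
            outcome = "refused: outside role"
        self.evidence.append(f"{role.name} -> {action}: {outcome}")
        return outcome.startswith("allowed")


if __name__ == "__main__":
    summarizer = AgentRole(
        name="summarizer",
        helps_with="meeting recaps for the launch room",
        context=frozenset({"launch-room"}),
        can_suggest=frozenset({"action-items"}),
        can_change=frozenset({"draft-summary"}),
        needs_approval=frozenset({"publish-summary"}),
    )
    ws = Workspace()
    ws.authorize(summarizer, "draft-summary")                       # True
    ws.authorize(summarizer, "publish-summary")                     # False
    ws.authorize(summarizer, "publish-summary", approved_by="ana")  # True
    ws.authorize(summarizer, "close-ticket")                        # False
    print("\n".join(ws.evidence))
```

Because the role is frozen data, correcting an agent here means replacing its declared role, for example with `dataclasses.replace(summarizer, can_change=frozenset())`, rather than hoping a prompt is followed. The sketch assumes `approved_by` names a human; enforcing that is left out for brevity.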

The future is not one giant assistant floating above every task.

The future is many bounded AI participants working inside human-owned context.

Human authority must remain real

“Human in the loop” is not enough.

A human can be technically present and still not be meaningfully in control.

If the person does not have context, timing, authority, and the ability to correct or stop the system, then human oversight becomes approval theater.

Shared Intelligence should keep humans in authority, not in drudgery.

That distinction matters.

The goal is not to make people manually inspect every generated sentence, every suggested task, and every possible action. The goal is to put human judgment where it matters most: framing, direction, exception handling, approval, relationship, ethics, taste, and responsibility.

AI should carry more of the coordination burden.

Humans should carry the authority.

The loop should be designed around that fact.

The idea economy needs shared intelligence

AI makes execution easier to initiate.

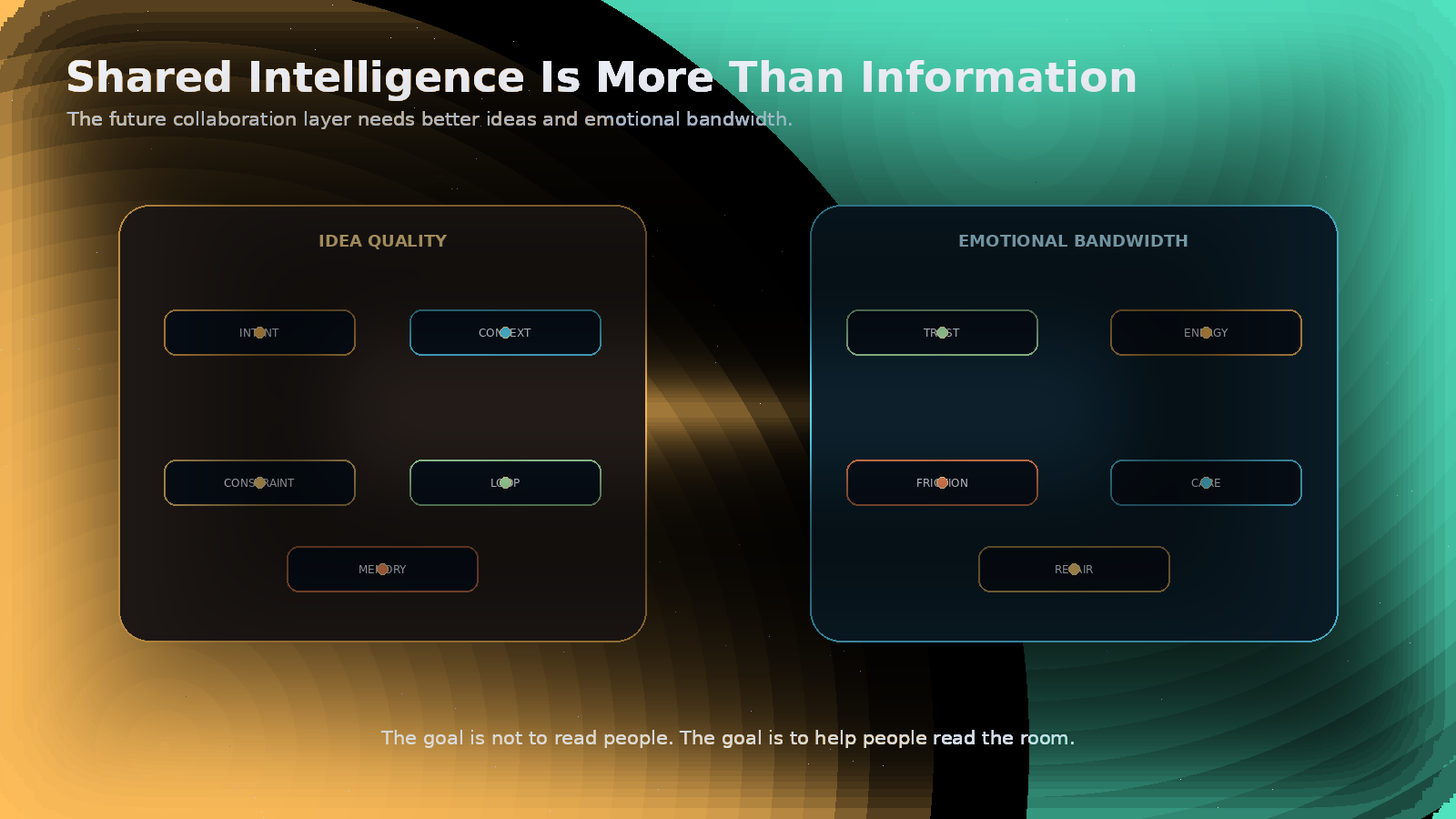

That means the quality of the originating idea matters more, not less.

A vague idea can now become a landing page, a prototype, a sales sequence, a legal draft, a financial model, or a customer workflow very quickly. That is exciting. It is also risky.

The difference between “build a health tracker” and “build a secure health tracker” is not cosmetic. One missing word can change the privacy model, the authentication path, the data boundary, the review loop, and the human consequences.

As output becomes abundant, value moves upstream.

The scarce input becomes the quality of framing: intent, context, constraint, loop, and memory.

Shared Intelligence gives ideas a place to improve before they scale.

A good shared workspace should help a team ask:

- Are we solving the right problem?

- What assumption is missing?

- What constraint should be explicit?

- Who needs to review this?

- What would make this safe enough to act on?

- What should carry forward after we learn?

The next advantage will not belong to whoever generates the most.

It will belong to the teams who can originate, refine, remember, and carry better ideas forward together.

Emotional bandwidth is part of intelligence

A collaboration system that only optimizes for information will misunderstand humans.

People are not machines attached to keyboards. Work succeeds or fails through trust, energy, motivation, fear, resentment, hope, tension, fatigue, pride, care, and repair.

AI should not become emotional surveillance.

It should not claim to know the inner truth of a person from a facial expression, sentence fragment, delay, or tone. That is overreach.

But AI can help humans communicate with more care.

It can help a founder write with urgency without panic. It can help a manager acknowledge effort before asking for more. It can help a team notice unresolved tension. It can help turn frustration into a named blocker. It can help protect enthusiasm by turning excitement into a credible next step.

The goal is not to read people.

The goal is to help people read the room.

Shared Intelligence needs both analytical intelligence and emotional bandwidth.

Without both analytical strength and emotional responsibility, the future of collaboration will fail.

The enemy is faster fragmentation

The wrong future is easy to imagine.

Every person has their own AI. Every department has its own agents. Every tool generates output. Every workflow has automation. Every meeting has a transcript. Every document has a summary. Every system claims to remember.

And somehow nobody knows what is true.

That is not intelligence.

That is fragmentation at machine speed.

The enemy is not AI.

The enemy is private acceleration without shared context, automation without authority, memory without consent, output without review, and speed without responsibility.

Shared Intelligence is the counterforce.

It says: keep the humans together. Keep the AI inside the context. Keep the source context and tradeoffs attached to the artifact. Keep the decision visible. Keep the boundary clear. Keep the memory governed. Keep the work continuous.

That is how speed becomes intelligence instead of chaos.

The golden age will not be automatic

There is no guarantee that AI produces a better human future.

Human-AI collaboration does not work by magic. Research already shows that human-AI combinations are not automatically better than the best human or AI alone. The gains depend on the task, division of labor, system design, and how people actually use the technology.

That should make builders humble.

Adding AI to a workflow is not a strategy.

Designing the collaboration is the strategy.

The golden age will belong to people and organizations that redesign work around shared context, human authority, and intelligent loops.

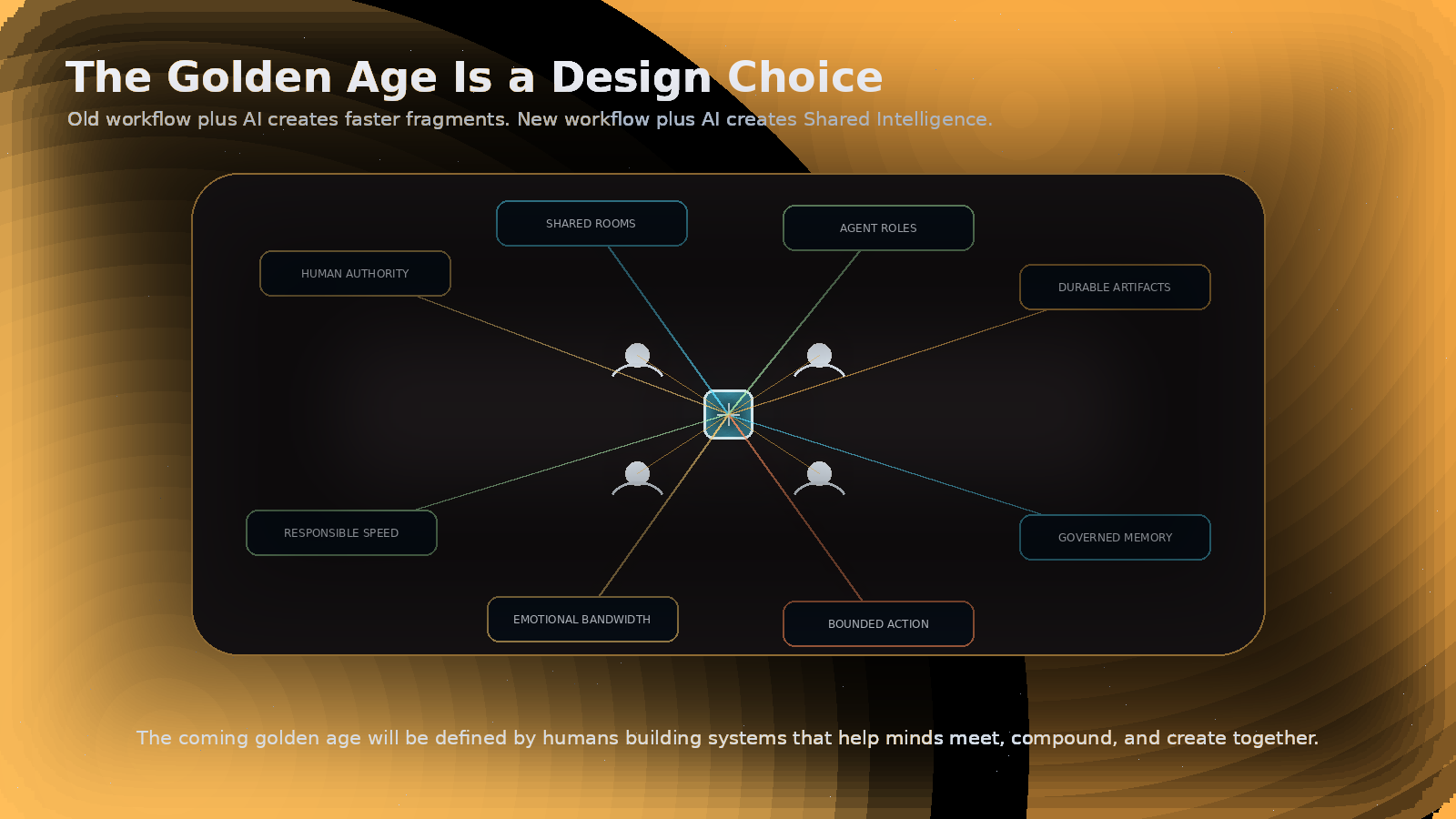

Old workflow plus AI creates faster fragments.

New workflow plus AI creates Shared Intelligence.

What Sociail is being built toward

This is the future Sociail is being built toward.

Not another chatbot.

Not another private productivity hack.

Not an agent platform that pretends humans are obsolete.

Sociail is being built as a shared AI workspace where people and AI participants can work from the same context, shape durable outputs, and prepare bounded follow-through with visible human authority.

The Early Access product is narrower than the full vision. It has to be. Serious products earn their way forward.

But the direction is clear:

- shared rooms instead of private AI tabs

- room-aware agents instead of isolated prompts

- durable artifacts instead of disposable chat output

- visible trust boundaries instead of invisible automation

- governed memory instead of accidental forgetting or reckless capture

- human-owned follow-through instead of autonomous chaos

That is the practical foundation of Shared Intelligence.

The manifesto

We believe intelligence is becoming collaborative by default.

We believe AI should amplify human agency, not erase it.

We believe the next collaboration layer will be built around shared context, not private tabs.

We believe conversation is a first-class work surface.

We believe memory must be permissioned, bounded, and correctable.

We believe agents need roles, limits, and visible authority.

We believe trust belongs in the product experience, not only in policy documents.

We believe emotional context is part of collaboration, and must be protected rather than exploited.

We believe better ideas need better loops before they become faster execution.

We believe humans should remain responsible for direction, judgment, ethics, taste, relationships, and final authority.

We believe AI can carry more of the coordination burden so humans can do more of the human work.

We believe the next great products will not simply answer questions. They will help people and AI think, decide, create, remember, and act together.

We believe Shared Intelligence can become a golden age of human-AI collaboration if it is built with courage, restraint, and respect.

Think better, together

The future should not be millions of isolated people whispering prompts into machines.

The future should be humans thinking together with AI in the room, without losing the thread.

A team should be able to return to the source context and tradeoffs behind a decision.

A founder should be able to turn a messy conversation into a living strategy.

An operator should be able to see what changed, who approved it, what the AI suggested, and what still needs judgment.

A customer conversation should become context the team can learn from.

An artifact should not die after it is created.

A correction should improve the next loop.

A room should become smarter because the people and AI inside it keep learning together.

That is Shared Intelligence.

Not artificial intelligence alone.

Not human intelligence alone.

A new collaboration layer where people and AI systems can work from shared context, with durable memory, visible trust, and human authority.

This is not the end of human work.

It is the beginning of better human work.

The coming golden age will not be defined by machines replacing minds.

It will be defined by humans finally building the systems that help minds meet, compound, and create together.

That is the future I am building toward.

And that is the promise of Shared Intelligence.

Continue the reading path

- The Conversation Is the Product

- The Productivity Singularity Is a Workflow Problem

- What Trustworthy AI Collaboration Looks Like

- Shared Intelligence Is the Second Brain

- Shared Intelligence Needs Emotional Bandwidth

Further reading

- Alan Turing: Computing Machinery and Intelligence

- Dartmouth: Artificial Intelligence coined at Dartmouth

- J. C. R. Licklider: Man-Computer Symbiosis

- Douglas Engelbart: Augmenting Human Intellect

- Nature Human Behaviour: When combinations of humans and AI are useful

- Microsoft Work Trend Index 2026: Agents, human agency, and the opportunity for every organization

- NIST AI Risk Management Framework

- EU AI Act Article 14: Human oversight